Lessons for central bankers from the history of the Phillips Curve

Speech by Jürgen Stark, Member of the Executive Board of the ECB, delivered at the Conference “Understanding Inflation and the Implications for Monetary Policy: A Phillips Curve Retrospective”, Cape Cod, 11 June 2008

It is a great pleasure for me to be here today at this conference.

I will try to look at the historical evolution of the Phillips curve and its interaction with macroeconomic outcomes from a slightly eccentric angle. Forty years ago it suddenly became very difficult even for seasoned policymakers who had been raised in the revered intellectual tradition of monetary orthodoxy to resist the revolutionary notion of a stable trade-off between inflation and unemployment. Some rejected this notion – out of common sense and practical experience – and did so before scholars in universities could prove that it was theoretically fragile and empirically elusive.

I view this episode – in which honest civil servants were ahead of their times in rejecting faulty propositions – as highly symbolic. The episode is representative of the key but shifting and ambivalent relationship between cutting-edge economic research and long-established central banking principles. At the end, I will submit a few reflections on how that relationship ought to be governed in modern policy-making institutions and how the ECB, for its part, has insured against the major policy failures that revolutionary ideas can inflict if applied untested.

1. The evolution of the Phillips curve: the interplay between ideas and macroeconomic outcomes

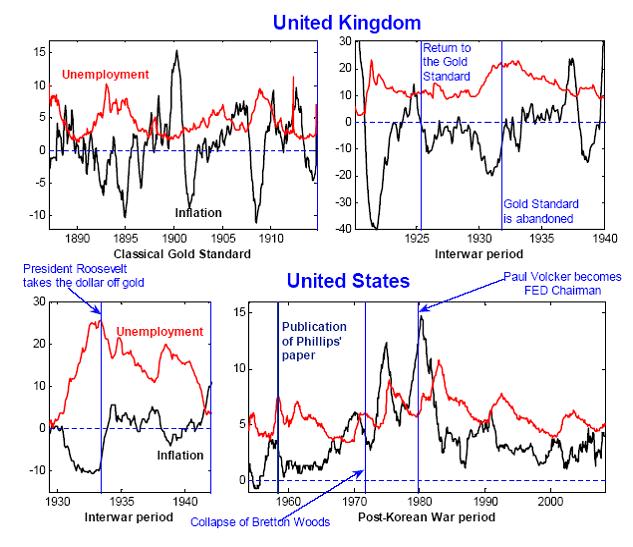

The two top panels of Figure 1 show the kind of evidence A.W. Phillips had available when he published his ground-breaking paper fifty years ago. [1] The two panels show the rate of unemployment and annual wholesale price inflation in the United Kingdom, during the Classical Gold Standard, and during the period between the two World Wars, respectively. In line with Phillips (1958), the clear impression emerging from the figure is that of a relatively stable relationship between the two variables, which is especially noteworthy when you consider that the second sample period comprises the Great Depression.

Just two years after the publication of Phillips’ paper, the eponymous curve was made the centrepiece of cutting-edge macro-economic thinking by two future Nobel prize winners, Paul Samuelson and Robert Solow. [2] Although Samuelson and Solow were careful in conjecturing that changes in inflation expectations might shift the unemployment-inflation trade-off upwards or downwards, such care was ultimately lost in the fast-growing industry of macro-analyses that was spawned by their article. Samuelson-Solow’s followers took the Phillips curve as literally offering a ‘menu’ of combinations of inflation and unemployment from which policymakers could choose at will—that is, an exploitable trade-off.

In February 1963, three years after the publication of Samuelson and Solow’s paper, Federal Reserve Chairman William McChesney Martin openly rejected the notion of an exploitable trade-off between inflation and unemployment in his statement before the Joint Economic Committee of the U.S. Congress. [3] Disapprovingly, he pointed out that:

‘[…] there was even serious discussion of the number of percentage points of inflation we might trade off for a percentage point increase in our growth rate. The underlying fallacy in this approach is that it assumes that we can concentrate on one major goal without considering collateral, and perhaps deleterious, side effects on other objectives.’

In December 1965, two years before Milton Friedman’s Presidential Address to the American Economic Association, which (re-)introduced the notion of the long-run vertical Phillips curve into macroeconomics, [4] Chairman Martin discussed the role of inflation expectations in shifting the unemployment-inflation equilibrium along the very same lines Friedman and Edmund Phelps [5] would then expound in analytical terms: [6]

‘If labor had had reason to fear a persistent substantial rise in the cost of living, it would have felt compelled to seek compensatory increases in wages; and if management had had reason to expect a general increase in the price level, it would not have felt compelled to resist such demands. In that case, wages would certainly have risen faster than productivity; prices would have been raised in consequence; and the feared inflationary spiral would have become actuality.’[7]

Subsequent developments are well known.

On the theoretical front, 1967 and 1968 saw the independent enunciation of the ‘natural rate hypothesis’, both in Friedman’s Presidential Address to the American Economic Association — which I mentioned already — and in the work that ultimately won Edmund Phelps the Nobel Prize. Underlying the natural rate hypothesis are two key concepts.

First, monetary neutrality: in the long run, the Phillips curve is perfectly vertical, which is another way of expressing Robert Lucas’s famous dictum that ‘You can’t buy a permanent economic high just by printing money.’

Second, temporary departures from the natural rate of unemployment can take place only to the extent that agents’ expectations are, for some reason, out of line with the economy’s authentic dynamics. When, in 1972, Robert Lucas applied to the Phillips curve the hypothesis of rational expectations, the notion of an exploitable trade-off was banished from frontier research.

Lucas (1972a), in particular, showed that the traditional ‘Solow-Tobin’ test of the natural rate hypothesis — based on the simple regression of inflation on lags of itself and on the rate of unemployment — was flawed in principle, as its results crucially depended on the nature of the monetary policy regime, and could therefore not be used to validate or invalidate the natural rate hypothesis.
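As a rough illustration of this argument, the natural rate hypothesis and the regression test criticised by Lucas can be written in stylised form. The notation is an assumption introduced here for exposition and does not come from the original papers: inflation is written as pi_t, expected inflation as pi_t^e, unemployment as u_t, the natural rate as u^*, and alpha, beta_i, gamma and c are coefficients.

```latex
% Expectations-augmented Phillips curve (stylised, assumed notation):
% unemployment u_t departs from the natural rate u^* only when
% inflation \pi_t differs from what agents expected, \pi_t^e.
\pi_t = \pi_t^{e} - \alpha\,(u_t - u^{*}) + \varepsilon_t, \qquad \alpha > 0

% Test regression of the kind described above: expected inflation is
% proxied by lags of inflation, and the natural rate hypothesis is
% judged by whether the lag coefficients sum to one.
\pi_t = c + \sum_{i=1}^{k} \beta_i\,\pi_{t-i} + \gamma\,u_t + \eta_t,
\qquad H_0:\ \sum_{i=1}^{k} \beta_i = 1
```

Under rational expectations, the weights that best forecast inflation from its own past depend on how policy makes inflation behave. If, for instance, inflation is mean-reverting under the prevailing regime, the estimated coefficients can sum to less than one even when the long-run curve is vertical, which is why the regression cannot settle the question.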

Rerunning history is obviously impossible. It is my conviction, however, that these theoretical developments, by themselves, would most likely not have succeeded at displacing the old consensus of an exploitable trade-off with the swiftness we have seen in reality, if it had not been for a dramatic, concomitant real-world development: the Great Inflation.

Indeed, the Great Inflation episode was associated with the breakdown of empirical Phillips curves, as equations estimated over the previous, more stable period lost predictive power and had to be adjusted judgementally by forecasters in policy institutions.

The shifting nature of the unemployment-inflation relationship around the time of the Great Inflation is clearly apparent even to the naked eye from the bottom-right panel of Figure 1. Whereas from the second half of the 19th century up until the mid-1960s inflation and unemployment had systematically moved in opposite directions in both the United Kingdom and the United States, around the time of the Great Inflation they started to move upward in lockstep.

As pointed out by Lucas and Sargent in a paper presented in 1978 at a conference organised by the Federal Reserve Bank of Boston, [8] the inflation of the 1970s represented, in this respect, a sort of large-scale experiment for the old consensus. For Lucas and Sargent the verdict of this experiment was clear: the ‘halcyon days’ [9] of post-Keynesian macroeconomics, with its belief in the exploitability of the Phillips trade-off, were gone for ever.

Over subsequent years, and until the mid-1990s, the Phillips curve essentially disappeared from frontier research. Alienated by the disappointing performance of the Phillips curve, and following the lead of Kydland and Prescott, [10] frontier researchers were preoccupied with building flexible-price general equilibrium models driven by technological impulses. Within the new framework, there was indeed no place for a Phillips curve to speak of.

The revival of interest in the Phillips curve started in the 1990s, and was driven by two separate developments. From an empirical standpoint, work concentrated on the forecasting performance of ‘generalised Phillips curve models’ comprising, in lieu of unemployment, activity indicators extracted from dynamic factor analysis. Compared to traditional benchmarks, this line of research has shown that generalised Phillips curve models exhibit a remarkable improvement in forecasting ability. [11]

On the theoretical front, there was a growing realisation that the research programme launched by Kydland and Prescott was facing fundamental problems. Notably, the absence of nominal rigidities in a real business cycle setting implied, among other things, that the new paradigm was fundamentally incapable of reproducing the key macroeconomic facts uncovered by the structural VAR literature: first and foremost, the sluggish and drawn-out response of key variables such as inflation and output growth to monetary policy innovations. The DSGE research programme, which started around the mid-1990s, [12] was motivated by the need to reproduce key macroeconomic stylised facts within otherwise standard general equilibrium business cycle models. The introduction of various nominal and real frictions in such models has spawned a modelling effort and a flourishing of econometric work which is, to this day, continuing unabated. The best testimony to the importance of this line of research is that DSGE models have breached the walls of academia, and are today being increasingly used at central banks as a key input in the policy process.

A key building block of this class of models is the ‘New Keynesian Phillips curve’, which in its original incarnation relates current inflation to its expectation next period and to current real marginal cost. For several years this purely forward-looking Phillips curve specification has been regarded as producing implications for inflation dynamics that are at odds with the high inflation persistence that is typical of most of the post-WWII era. Several solutions have been proposed to introduce persistence in the model-implied time process for inflation, the best known being the idea that prices that are not reset optimally each period are somehow ‘indexed’ to past inflation. [13] Formulations along these lines suffer however from several problems.

First, except in labour markets, there is little evidence in our economies documenting price-indexing practices of the type described by theory.

Second, and fundamentally, even conceding that such practices might be wide-spread in high-inflation regimes, it is hard to believe that they would survive under more stable monetary conditions. [14]

And recent research, indeed, shows that the backward-looking Phillips curve component disappears under stable monetary regimes, i.e. under regimes in which inflation is strongly mean-reverting around the quantitative definition of price stability entertained by the central bank. [15] Further, once one controls for fluctuations in trend inflation, the Phillips curve appears indeed to be purely forward-looking. [16] Although this line of research is very recent, and its results ought therefore necessarily to be regarded as tentative, these findings reinforce the notion of the fundamental power of monetary institutions to permeate economic behaviour profoundly, direct expectations and, ultimately, determine the intimate fabric of market economies.
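For reference, the two specifications contrasted in this discussion are often written along the following lines. This is a sketch in commonly used textbook notation, which is an assumption here rather than anything taken from the cited papers: pi_t is inflation, mc_t is real marginal cost (in deviation from its steady state), E_t denotes expectations formed at time t, and beta, kappa, gamma_b and gamma_f are coefficients.

```latex
% Purely forward-looking New Keynesian Phillips curve: current inflation
% depends on expected inflation next period and on current real marginal cost.
\pi_t = \beta\,E_t\,\pi_{t+1} + \kappa\,mc_t

% Hybrid variant with indexation to past inflation: a backward-looking
% term in lagged inflation is added to generate persistence.
\pi_t = \gamma_b\,\pi_{t-1} + \gamma_f\,E_t\,\pi_{t+1} + \tilde{\kappa}\,mc_t
```

Setting gamma_b to zero in the second equation recovers the first. Read in these terms, the findings cited above amount to saying that the estimated weight on lagged inflation shrinks towards zero under stable monetary regimes.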

The history of the Phillips curve that I have just briefly summarised suggests several reflections to me. The fact that prominent central bankers wedded to the classical principle of monetary neutrality were ultimately vindicated bears a degree of significance that transcends the confines of central banking chronicles. I view this episode as a highly symbolic manifestation of a fundamental problem that central bankers continually face: namely, how to govern the relationship between policy-making and cutting-edge economic research. Let me turn to this point next.

2. The relationship between economic research and economic policymaking

Before a new medicine is launched on the market, it has to be extensively tested. Introducing new drugs on the market without extensive testing, and without further government vetting, would be considered a kind of social hazard that would undermine the very foundations of modern collective life. Beyond and after precautionary testing, the application of the medicine on large numbers of patients provides a source of ongoing information-gathering that expands knowledge of its healing properties. So, from time to time we learn that the Food and Drug Administration interrupts the sale of drugs that have been on the market for years, on the grounds provided by new evidence concerning previously unknown deleterious side effects. [17]

Compared with medical research, economics (and notably the economics and econometrics of Phillips curve relationships) presents the additional, insurmountable problem that new theories cannot even be tested before application. To be sure, history has offered a few ‘natural experiments’: for example, the hyperinflations that swept Europe after the First World War were a prominent collective test, whose lessons have been absorbed, and are today deeply entrenched in the collective psyche of many European peoples. But the fundamental irreproducibility of controlled experiments in social sciences remains. True, in recent years, a very limited number of theories in the field of social science have been subjected to experiments. And a recent strand of macroeconomic literature [18] has even argued that, in the presence of uncertainty about the structure of the economy, monetary authorities should ‘experiment’, by running monetary policy in such a way as to create sufficient variation in the data. By standard econometric arguments, this will indeed allow better identification of the relevant, unknown deep parameters for future policy conduct.

When presented with this line of argument, however, a policymaker’s instinctive reaction is that it cannot possibly be right.

First and foremost, any experimenting with the economy would inevitably and fatally run the risk of destabilising expectations. Indeed, the result that it is optimal to experiment with the economy was originally obtained within models in which the public played a purely passive role and the behaviour of the policymaker could not alter the public’s beliefs and expectations.

Second, when this assumption has recently been relaxed in models in which the public is allowed to learn from the behaviour of the policymaker, [19] the result was overturned. Optimal policy becomes distinctly conservative, in the sense that policymakers should be wary and respectful of the fundamental fragility of the macroeconomic equilibrium to any perturbation that policy experiments could introduce.

In a nutshell, the initial, radical result in favour of monetary policy experimentation has been reversed. The fragility of the earlier result — and the transience of many such results, in the history of the theory of economic policy, which had appeared rock-solid at first sight — is a memento to policy practitioners.

This, of course, poses an issue concerning the right balance between creativity and pragmatism in our policy-making institutions. Isn’t it the case that an excessive intellectual conservatism might end up leaving our policy-making institutions ‘behind the curve’, clinging to dead theories, and unable to cope with the new challenges of an ever-changing world?

To be sure, highly innovative ideas will not systematically be proven wrong: heliocentrism — a revolutionary paradigm when it was first expounded — finally and definitely displaced previously dominant geocentrism. We should be careful not to censor research just for the sake of intellectual conservatism. However, the intrinsically entrepreneurial nature of research, whatever the specific field of endeavour, means that the incidence of failure is bound to be high. Failure in economic policy means potential for economic damage.

A safe principle that has always worked in the past is that the introduction of new ideas and concepts into a central bank’s monetary policy framework should be evolutionary, as opposed to revolutionary. Historically, a typical example of this has been the introduction, towards the end of 1999, of an explicit numerical objective for inflation on the part of the Swiss National Bank (SNB). Since its foundation, in 1907, the SNB has been a bulwark against inflation, delivering an annual inflation rate that, over the entire century – excluding war years – was just 2.1 per cent on average. Further, back in the 1970s, around the time the Great Inflation plagued many countries, the SNB, together with the Bundesbank, ‘showed the way’ in which inflation could be resisted, amidst international turbulence, by appropriately restrictive policies. The introduction of an explicit numerical objective for inflation therefore in no way radically altered its monetary policy framework: rather, by providing a ‘focal point’ for inflation expectations, the only goal of such innovation was to improve the framework’s performance, and to facilitate the central bank’s task within the framework.

This naturally leads me to the final part of my speech. In recent years, an amended Phillips curve, which shifts with the state of expectations, has been made the modelling centrepiece of innovative proposals to conduct monetary policy without explicit reference to monetary aggregates. Part of the academic community, in particular, has advised the European Central Bank to abandon the prominent role it assigns to a careful analysis of monetary aggregates. Based on my previous discussion, the key question then becomes: ‘Is such a radical degree of innovation justified?’ I will offer my thoughts on this question in the remainder of my remarks.

3. Money and the Phillips curve based model of inflation

There is no role for monetary analysis in the model of inflation determination that is centred around the New-Keynesian Phillips curve. The announcement of an inflation target together with a promise to move the real interest rate sufficiently forcefully in response to deviations of observed inflation from target is a necessary and sufficient strategy for price stability in the model. The real shocks that buffet the economy will cause time variation in monetary aggregates, but such movements will be uninformative: real shocks will manifest themselves in other variables in the system as well, and those other variables will be sufficient statistics for the underlying state of the model economy.

Conversely, shocks originating in the money market are often un-modelled within the framework, because they are inconsequential. In the model, a monetary authority setting an interest rate as a function of inflation deviations from target will automatically offset those shocks and insulate the economy from their potentially malign influences on real variables and inflation. A Taylor rule can do the job and thus make monetary shocks completely uninteresting from a monetary policy stabilisation standpoint.
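The kind of rule referred to here can be sketched as follows. The functional form and symbols are assumptions made for illustration, not a specification given in the speech: i_t is the policy interest rate, pi_t is inflation, pi^* is the inflation target, r^* is the equilibrium real interest rate and phi_pi is the response coefficient.

```latex
% Simple interest-rate rule (stylised, assumed notation): the policy rate
% responds to the deviation of inflation from the announced target.
i_t = r^{*} + \pi^{*} + \phi_\pi\,(\pi_t - \pi^{*}), \qquad \phi_\pi > 1
```

With a response coefficient above one, the nominal rate moves more than one for one with inflation, so the real rate rises when inflation exceeds the target, which is the sense in which the rate is moved ‘sufficiently forcefully’. Because the rate is set without reference to the quantity of money, a shift in money demand is absorbed by the money stock at the given interest rate and, within the model, leaves inflation and output unaffected.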

The question is whether a central bank can content itself with such a stylised representation of the economy. There are two issues.

First, can this model be trusted to get the facts right in the long run?

Second, can monetary shocks be ignored even at business cycle frequencies?

The first issue concerns the long-run behaviour of the model. In the long run, the model can replicate the statistical association between money growth and inflation. But it does not reproduce the lead-lag structure that links the two variables. The model assumes a given inflation target defined in numerical terms. By simulating the model, one can prove that — provided some mild conditions on the Taylor rule are satisfied — inflation will consistently fluctuate around the objective over the business cycle and money in the long term will grow at a rate consistent with the objective as well. Only exogenous innovations to the numerical objective will — after some time — result in lasting changes in inflation and, as a consequence, in persistent changes to money growth.

However, this lag structure implied by the model, in which changes to the inflation objective precede changes in inflation, which in turn precede changes in money growth, is at odds with the empirical evidence. One extremely robust feature of the statistical association between money growth and inflation, detectable across diverse monetary regimes and historical periods, is that persistent changes in (trend) money growth precede, rather than follow, changes in trend inflation. This can be interpreted as money having a leading role in signalling future changes in inflation, and even an active role in determining such changes in the medium term. [20]

I extract one lesson from this observation. Money growth might indeed have something to do with making the price stability objective credible. In the model, credibility of announcements is not at stake. In reality, that credibility needs to be buttressed – in the eyes of the economic agents – by mutually reinforcing “strategic instruments”. I interpret the evidence as suggesting that money can indeed act as the second “strategic instrument,” on a par with a clear announcement of a quantitative definition of price stability, supporting that definition in the long run.

As regards the behaviour of the model at business cycle frequencies, as I mentioned already, the model does not feature a money market or a financial sector. Consequently, it simply assumes away the shocks that, even in the short run, can originate in those sectors. This has two main implications: one on the demand for money side, the other on the side of liquidity and credit provision.

On the demand side, the model does not substantiate money’s role as the principal vehicle for settling transactions and providing a “store of value”, for example, in the face of heightened uncertainty. However, it is precisely in such conditions that we see money play an active role as a primary attractor for investors and financial intermediaries alike in search of portfolio security and liquidity. In the mainstream model, all eventualities can be hedged in advance, so there is no need to maintain a liquid portfolio even in the face of major shocks. In reality, money can act as a primary determinant of portfolio allocation and spending decisions in conditions of major and sudden shifts in risk perceptions. By monitoring money and liquidity preferences a central bank acquires a first-rate indicator of the state of confidence.

The second implication works through the provision of liquidity. In the mainstream model there is no borrowing or lending activity. However, these “missing” activities are important, as we all realise when credit markets become dysfunctional. Shocks to, for example, financial intermediaries’ perceptions of credit and aggregate risk can drive variable wedges between the interest rate that the monetary authority can control and at which banks can borrow (in fact, the only interest rate that is defined in the model) and the interest rate at which banks lend, which is relevant for aggregate demand. Observation and empirical tests tell us that the latter spectrum of market rates, applying to borrowers with different degrees of creditworthiness, is linked in various ways to liquidity. But the absence of liquidity makes the mainstream model unable to provide relevant insights into the determination of credit spreads, of term premia and, in a word, of asset prices, which are the principal link in the chain of propagation of monetary policy through the economy.

The strategic assignment to expand our view beyond the real sector has been instrumental in developing two avenues of analysis that would otherwise have remained underdeveloped.

First, we can count today on a first-rate system of real-time monitoring of monetary facts, to which we can resort instantly, especially in times of emergency. The financial shocks which became apparent in August 2007 posed – and continue to pose – delicate interpretation issues: Is the soaring growth of credit that we observe yet another manifestation of market participants’ anxiety about future credit access? Is it due to forced re-intermediation? Or, rather, is it to be taken as a sign that the financial turbulence is not severe enough to dent firms’ and lenders’ confidence in the future prospects of our economy? If the first two cases were true, some monetary policy action would be warranted. If the last interpretation were true, however, the monetary policy implications would be different, possibly opposite. Monetary analysis has helped us to interpret events that were crucial for the design of policy.

The second avenue of analysis that the monetary side of our strategy has encouraged is the macroeconomic modelling of the monetary sector. As a result, we can currently use large-scale estimated DSGE models with a developed credit market that permit informative simulation experiments around baseline forecasts. We exploit the active channels of transmission from credit and money to activity and inflation which are built into these models to quantify more precisely the risks to the projections which one could associate with the financial turmoil. This would have been impossible if we had contented ourselves with economic models in which inflation and output move only because of innovations to consumption, investment, or cost-push forces.

In summary: I assign money two functions.

The first is that of a reinforcing strategic instrument that in the long term buttresses our determination to preserve price stability. It complements and reinforces our announcement of a quantitative definition of price stability. The ‘inflation objective’ term that appears in many versions of the new Phillips curve is a proxy for central bank credibility. However, its connection with the deep strategic foundations of a central bank is what is missing from the model, and it is what the ECB has tried to make firm and clear with its monetary pillar since our strategy was first announced in 1998.

The second function of money is that of a privileged monitoring device that can help explain facts and shocks even at a shorter horizon. These are shocks that originate in the market for funds and liquidity and can from time to time disturb the workings of the transmission of monetary policy. The mainstream model of inflation determination, organised around the New Keynesian Phillips curve, is inhospitable to liquidity and risk considerations. But this is no reason for abandoning monetary analysis. It is a reason for refining the model and bringing it to a stage where it can provide help to central bankers in all the tasks in which they daily engage, be it the anchoring of inflation expectations or the identification and deflection of monetary shocks.

5. Concluding remarks

After years of Keynesian/Monetarist controversies over the sources of inflation, a consensus has emerged and is now dominant in macroeconomic theory and practice: inflation is a monetary phenomenon. In the long term, monetary policy can only influence nominal variables. The consensus model centred around a reconstructed Phillips curve – unlike its infamous predecessor – grants no free lunch to policymakers. So, there is more than one sense in which we can all say that the new Phillips curve encapsulates fundamental tenets of prudent monetary policy-making: the inflation process is forward-looking and will inexorably and quickly incorporate and perpetuate any deviations from price stability if central banks were to start experimenting with the economy.

But inflation formation is a complex phenomenon in which expectations interact with current and past shocks – to the cost structure of firms as well as to the monetary and financial fabric of the economy – in ways that a Phillips curve cannot fully account for or describe. Failure to adopt an all-encompassing view of the inflation process will create the potential for economic damage.

The ultimate lesson that I draw from the vicissitudes of the Phillips curve over the past half century is that we should all beware of models that short-circuit the workings of a complex market economy into a single equation.

Thank you.

* * *

References

Benati, Luca (2008a), ‘Investigating Inflation Persistence Across Monetary Regimes’, Quarterly Journal of Economics, August 2008, forthcoming

Benati, Luca (2008b), ‘Money, Inflation, and New Keynesian Models’, ECB Working Paper, forthcoming

Bernanke, Benjamin S. (2006), ‘The Benefits of Price Stability’, speech delivered at the Center for Economic Policy Studies and on the occasion of the Seventy-Fifth Anniversary of the Woodrow Wilson School of Public and International Affairs, Princeton University, Princeton, New Jersey, February 24, 2006

Bertocchi, Graziella, and Michael Spagat (1993), ‘Learning, Experimentation, and Monetary Policy’, Journal of Monetary Economics, 32, pp. 169-183

Christiano, Lawrence J., Martin Eichenbaum, and Charles Evans (2005), ‘Nominal Rigidities and the Dynamic Effects of a Shock to Monetary Policy’, Journal of Political Economy, 113(1), pp. 1-45

Cogley, Timothy W. and Argia Sbordone (2008), ‘Trend Inflation, Indexation, and Inflation Persistence in the New Keynesian Phillips Curve’, American Economic Review, forthcoming

Ellison, Martin and Natacha Valla (2001), ‘Learning, Uncertainty and Central Bank Activism in an Economy with Strategic Interactions’, Journal of Monetary Economics, 48(1), 153-171.

Friedman, Milton (1968), ‘The Role of Monetary Policy’, American Economic Review, 58(1), 1-17

Hairault, Jean-Olivier, and Frank Portier (1993), ‘Money, New Keynesian Macroeconomics, and the Business Cycle’, European Economic Review, 37, 1533-1568

Kydland, Finn E., and Edward C. Prescott (1982), ‘Time to Build and Aggregate Fluctuations’, Econometrica, 50, 1345-1370

Lucas, Robert E., Jr. and Thomas J. Sargent (1978), ‘After Keynesian Macroeconomics’, in After the Phillips Curve, Federal Reserve Bank of Boston, Conference Series No. 19: 49-72; reprinted in the Federal Reserve Bank of Minneapolis Quarterly Review, 3 (1979): 1-6.

Martin, W. McChesney, Jr. (1963), ‘Statement’ by Wm. McC. Martin, Jr., Chairman, Board of Governors of the Federal Reserve System, to the Joint Economic Committee, February 1, 1963 (available at the St. Louis FED website).

Martin, W. McChesney, Jr. (1965), ‘Statement’ by Wm. McC. Martin, Jr., Chairman, Board of Governors of the Federal Reserve System, to the Joint Economic Committee, December 13, 1965 (available at the St. Louis FED website).

Martin, W. McChesney, Jr. (1971), ‘Inflation: Enemy of Growth’, Summary of Remarks by William McChesney Martin, Jr. before the 24th Annual Meeting of the Institute of Newspaper Controllers & Finance Officers, New York, Hilton Hotel, New York City, October 25, 1971 (available at the St. Louis FED website).

Phelps, Edmund S. (1968), ‘Money-Wage Dynamics and Labor-Market Equilibrium’, Journal of Political Economy, Vol. 76, No. 4, Part 2: Issues in Monetary Research, 1967, (Jul. - Aug., 1968), pp. 678-711

Phillips, A. W. (1958), ‘The Relation between Unemployment and the Rate of Change of Money Wage Rates in the United Kingdom, 1861-1957’, Economica, 25(100), 283-299

Rosal, Joao Mauricio, and Michael Spagat (2006), ‘Structural Uncertainty and Central Bank Conservatism: The Ignorant Should Shut Their Eyes’, mimeo, Royal Holloway College, University of London

Samuelson, Paul A. and Robert M. Solow (1960), ‘Analytical Aspects of Anti-Inflation Policy’, American Economic Review, Vol. 50, No. 2, Papers and Proceedings of the Seventy-second Annual Meeting of the American Economic Association (May, 1960)

Smets, Frank, and Rafael Wouters (2003), ‘An Estimated Stochastic Dynamic General Equilibrium Model of the Euro Area’, Journal of the European Economic Association, 1(5), 1123-1175

Stock, James H., and Mark W. Watson (1999), ‘Forecasting Inflation’, Journal of Monetary Economics, 44, 293-335

Yun, Tack (1996), ‘Nominal Price Rigidity, Money Supply Endogeneity, and Business Cycles’, Journal of Monetary Economics, 37(2), 345-370

Woodford, Michael (2007), ‘Interpreting Inflation Persistence: Comments on the Conference on Quantitative Evidence on Price Determination’, Journal of Money, Credit, and Banking, 39(2), 203-211

Figure 1. Inflation and unemployment in the United Kingdom and the United States since the Gold Standard era

[1] In fact, Phillips’ (1958) paper was based on annual data, whereas the two top panels of Figure 1 are based on monthly data downloaded from the NBER Macro History Database on the web (see www.nber.org).

[2] See Samuelson and Solow (1960).

[3] See Martin (1963).

[4] See Friedman (1968). Although published in 1968, Friedman’s Presidential Address was delivered to the American Economic Association on December 29, 1967.

[5] See Phelps (1968).

[6] See Martin (1965).

[7] He later summarised his position on the issue in the following terms: ‘My view of inflation is clear. To those who believe that full employment requires inflation, my answer is that unless inflation is restrained full employment is impossible.’ See Martin (1971).

[8] See Lucas and Sargent (1978).

[9] See Lucas and Sargent (1978).

[10] See Kydland and Prescott (1982).

[11] See e.g. Stock and Watson (1999). Further, although the bottom-right panel of Figure 1 shows that the inflation-unemployment correlation clearly appears to have broken down around the time of the Great Inflation, King and Watson show that the relationship does not appear to have experienced major instabilities once one focuses upon the business-cycle frequencies, i.e. those relevant for monetary policy.

[12] See Hairault and Portier (1993) and Yun (1996).

[13] See Christiano, Eichenbaum, and Evans (2005), and Smets and Wouters (2003).

[14] See Woodford (2007).

[15] See Benati (2008a).

[16] See Cogley and Sbordone (2008). Trend inflation is, according to this line of research, what ultimately imparts persistence to inflation.

[17] There is a clear parallel between the risk of side effects of pharmaceutical products and Chairman Martin’s words on the ‘collateral, and perhaps deleterious, side effects on other objectives’ in his testimony before the U.S. Congress in February 1963, when he flatly rejected the idea that it could be possible to permanently trade off a few percentage points of unemployment for a few percentage points of inflation.

[18] See e.g. Bertocchi and Spagat (1993).

[19] See Ellison and Valla (2001) and Rosal and Spagat (2006).

[20] Benati (2008b) shows that, over the last two centuries, the low-frequency components of money growth and inflation have exhibited an extraordinarily close co-movement, with a consistent lead of trend money growth over trend inflation by 2-3 years, in the United States, the United Kingdom, Norway, Sweden, Australia, and Canada. He argues that the resilience of both features to dramatic changes in the monetary regime naturally suggests that they are both structural in the sense of Lucas (1976). Finally, he shows that traditional New Keynesian models in which money plays a purely residual role are incapable of replicating such a lead-lag structure, as they typically imply that money growth lags inflation, or is, in the best of cases, contemporaneous with it.

Reproduction is permitted provided that the source is acknowledged.